Scaling impact measurement with the help of AI

As an organization grows, conducting and especially evaluating participant surveys can become a challenge. In a Data4Good project, five CorrelAid volunteers developed an automated evaluation process and a web application for impact measurement at In safe hands e.V.

In safe hands e.V. offers BUNTER BALL, a sports education prevention program for children of primary school age. The aim is not only to strengthen the children's motor development, but also to improve their emotional and social skills. Standardized interviews are conducted with the participating children before and after each school year in order to verify this effect and make the results measurable. The supporting volunteers record the children's answers in the original wording wherever possible.

In this project, the sections of the interviews dealing with the children's socially competent behavior and emotion regulation strategies were evaluated.

For further evaluation, the free-text answers recorded in the original wording must be assigned to categories. Previously, this was done manually by trained staff, which became increasingly time-consuming as the number of participants grew. The aim was therefore to simplify this process and automate it at least partially.

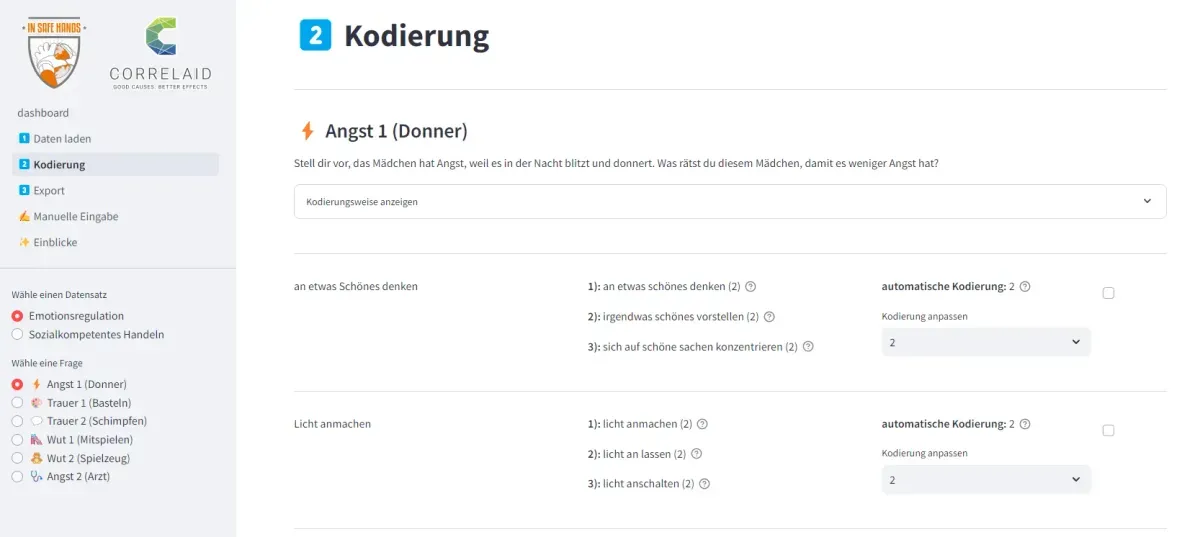

There are 6 questions in each of the two categories (socially competent behavior and emotion regulation). Children are given an example situation and asked what they would recommend a person in this situation to do:

Imagine the girl is scared because there is lightning and thunder in the night. What would you advise this girl to do to make her less afraid?

Examples of answers here would be: “think of something nice” or “turn on the light”.

In these responses, the child shows what is known as an adaptive emotion regulation strategy - it knows how to deal well with the emotion - and receives two points for this in the evaluation. Behavior in which the child devalues itself or reacts aggressively is referred to as a maladaptive emotion regulation strategy and is coded with 0 points. One point is awarded for all other strategies.
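The scoring scheme can be written down as a small lookup. This is only a sketch: the names `Strategy`, `POINTS` and `category_score` are hypothetical, and adding up the six answers of a category into one total is an assumption made for illustration, not something the evaluation is confirmed to do.

```python
from enum import Enum


class Strategy(Enum):
    ADAPTIVE = "adaptive"        # child knows how to deal well with the emotion
    OTHER = "other"              # any other strategy
    MALADAPTIVE = "maladaptive"  # self-devaluation or aggressive reaction


# Points per strategy type, as described in the text.
POINTS = {Strategy.ADAPTIVE: 2, Strategy.OTHER: 1, Strategy.MALADAPTIVE: 0}


def category_score(strategies):
    """Sum the points of one child's answers in one category (assumed aggregation)."""
    return sum(POINTS[s] for s in strategies)


# Six hypothetical answers for the six questions of one category.
answers = [Strategy.ADAPTIVE, Strategy.ADAPTIVE, Strategy.OTHER,
           Strategy.MALADAPTIVE, Strategy.OTHER, Strategy.ADAPTIVE]
print(category_score(answers))  # 2 + 2 + 1 + 0 + 1 + 2 = 8
```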

Once the project has been implemented, this assignment will be partially automated. Our tool is not intended to replace people in the evaluation process, but to support them. The aim is to save time on simple assignments so that more time remains for processing difficult cases.

The tool supports coding in two steps:

- finding similar statements that have already been coded

The system searches a table of already coded examples for similar statements and their coding to ensure that the same or similar statements always receive the same score.
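A lookup of this kind could be sketched as follows. This is not the project's code: the example table, the function names and the `top_k` parameter are hypothetical, and a toy word-count vector stands in for a real embedding model, so only statements that share words are matched here.

```python
import math
import re
from collections import Counter

# Hypothetical excerpt of the table of already coded statements: (text, points).
CODED_EXAMPLES = [
    ("concentrate on nice things", 2),
    ("switch on the light", 2),
    ("ask mom for help", 1),
    ("nothing", 0),
]


def embed(text):
    """Toy stand-in for an embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as Counters."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def most_similar(statement, examples=CODED_EXAMPLES, top_k=2):
    """Return the top_k coded examples as (similarity, text, points), best first."""
    query = embed(statement)
    scored = [(cosine(query, embed(text)), text, points) for text, points in examples]
    return sorted(scored, reverse=True)[:top_k]


for similarity, text, points in most_similar("turn on the light"):
    print(f"{similarity:.2f}  {points} points  {text}")
```

Swapping `embed` for a real sentence-embedding model would leave the rest of the lookup unchanged, since the search itself only relies on comparing vectors.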

Word vectors, also called embeddings, are used for this step, so that two statements do not have to use the same words to be recognized as similar. For example, for the statement “think of something nice”, the system finds the sentence “concentrate on nice things” in the training data, and for “turn on the light” it also finds a differently worded equivalent such as “switch on the light”.

- automatic coding suggestions

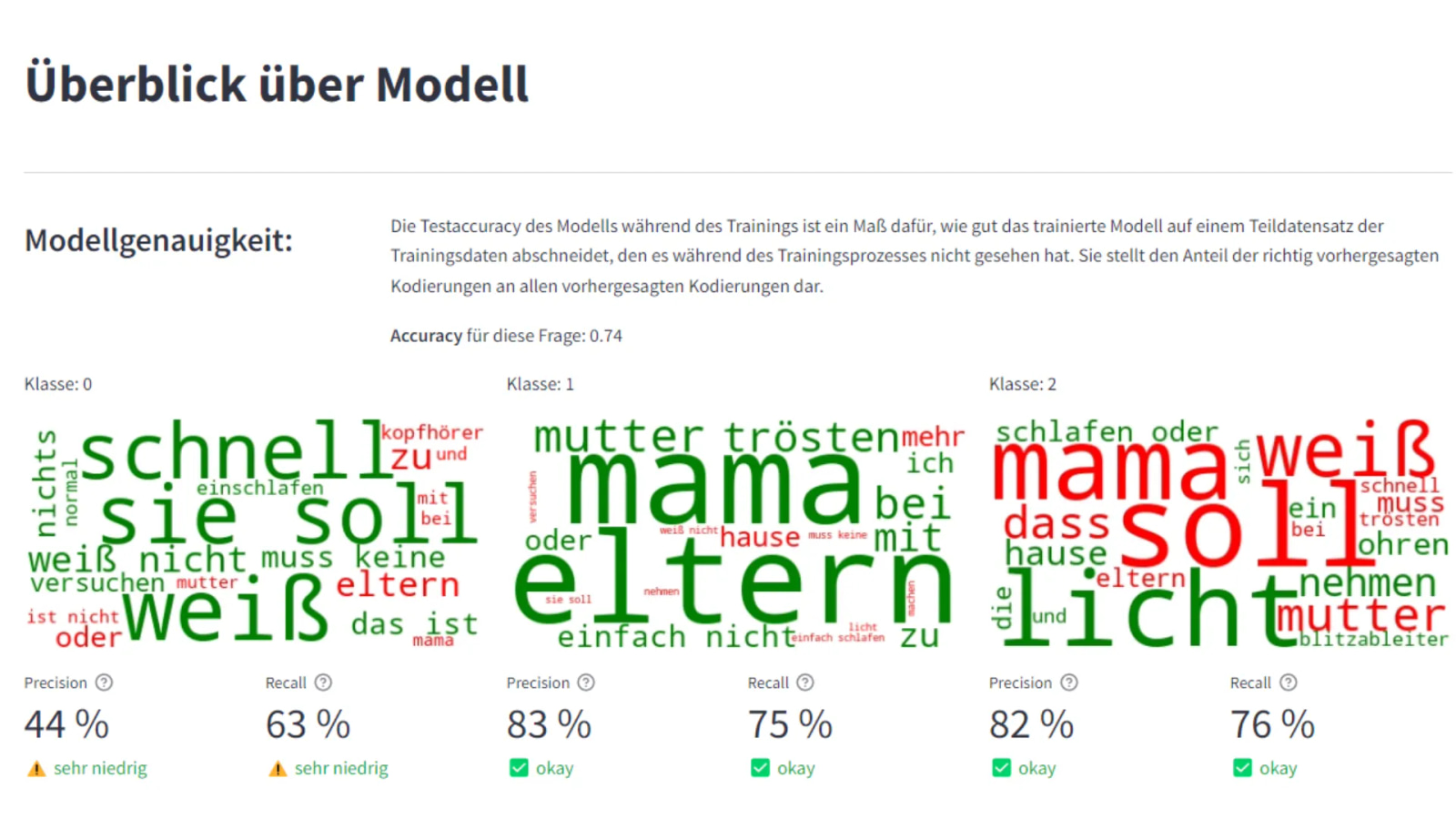

A coding suggestion is also calculated. A simple bag-of-words approach is used here. This is a machine learning approach in which the words occurring in a sentence are counted. Each word can be assigned a weighting for a specific category. The word “light” or “ears” indicates a coding of two points, while the word combination “don't know” or the word “nothing” indicates 0 points. Many statements that indicate the involvement of other people and contain words such as “mom”, “mother” or “parents” are coded with one point.

This is a very simple approach, which is particularly characterized by its explainability. It is very easy to understand why the system suggests a certain coding for a statement.
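To illustrate why such a suggestion is easy to explain, here is a minimal sketch of a weighted bag-of-words scorer. The terms are taken from the examples above, but the weight values, the `suggest` function and the way confidence is computed are assumptions for illustration. In a trained model the weights would be learned from the coded data rather than set by hand.

```python
import re
from collections import defaultdict

# Illustrative weights: term -> (points category, weight). The terms come from
# the examples in the text; the weight values are made up for this sketch.
WEIGHTS = {
    "light": (2, 1.0),
    "ears": (2, 1.0),
    "don't know": (0, 1.5),
    "nothing": (0, 1.0),
    "mom": (1, 1.0),
    "mother": (1, 1.0),
    "parents": (1, 1.0),
}


def terms(text):
    """Lower-case the text and return its words plus adjacent word pairs."""
    words = re.findall(r"[a-z']+", text.lower().replace("’", "'"))
    pairs = [f"{a} {b}" for a, b in zip(words, words[1:])]
    return words + pairs


def suggest(text):
    """Return (suggested points or None, confidence, evidence terms)."""
    totals = defaultdict(float)
    evidence = []
    for term in terms(text):
        if term in WEIGHTS:
            points, weight = WEIGHTS[term]
            totals[points] += weight
            evidence.append((term, points, weight))
    if not totals:
        return None, 0.0, evidence  # no known term: leave it to a human
    best = max(totals, key=totals.get)
    confidence = totals[best] / sum(totals.values())
    return best, confidence, evidence


print(suggest("turn on the light"))
print(suggest("I don't know, maybe ask mom"))
```

The returned evidence list names every term that contributed to the suggestion, which is what makes the result traceable for the person checking it.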

If the two approaches do not yield the same result, the corresponding entry is flagged with a warning. A message is also displayed if the system is unsure about the automatic coding, i.e. the confidence value is low. These flagged entries can then be checked manually and the coding adjusted if necessary.
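The flagging logic might look roughly like this. The function name `review_warnings`, the message texts and the threshold of 0.7 are hypothetical. The source does not state which confidence value counts as low.

```python
def review_warnings(similar_points, suggested_points, confidence, min_confidence=0.7):
    """Collect the reasons why an entry should be checked by a person."""
    warnings = []
    if similar_points != suggested_points:
        warnings.append("similar example and automatic suggestion disagree")
    if confidence < min_confidence:
        warnings.append("low confidence in automatic suggestion")
    return warnings


# Both steps agree and the suggestion is confident: nothing to flag.
print(review_warnings(similar_points=2, suggested_points=2, confidence=0.95))

# The steps disagree and the suggestion is uncertain: two warnings.
print(review_warnings(similar_points=1, suggested_points=0, confidence=0.6))
```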

What happens next?

The system is currently being used in a first run for coding new responses. The tool was published as a web app for the employees of In safe hands e.V. and is only accessible to an authorized group of people. Of course, there are already many ideas for further developing and improving the tool.

First, the suggestions can be improved continually by retraining with data from new surveys. Improving the machine learning algorithms and language models used can also contribute to this. It was important to us that all calculations can take place on our own server and that the data does not have to be sent to an interface from OpenAI or Google, for example. However, large language models (LLMs) will certainly also develop significantly over the next year and make it possible to run them on our own servers without a great deal of computing power.

Another possibility for further development is the further evaluation and visualization of the data. Up to now, our tool has only supported the coding of responses. The data is then provided as an export in an Excel spreadsheet. In the next step, it could also be used to visualize and evaluate the results.

The project has shown that even simple machine learning methods can offer great added value. The evaluation is now much faster and much easier than the manual coding in Excel spreadsheets used to be.

As CorrelAid volunteers, we also learned a lot about strengthening social and emotional skills through sport and were able to pass on our knowledge about data and artificial intelligence. Over a period of six months, this has resulted in a tangible project that does not chase the AI hype, but leads to a real improvement in work processes.

💡 Do you find the project exciting and would you like to carry out a Data4Good project in your own non-profit organization? You can find all the information you need at /en/using-data/projects/